
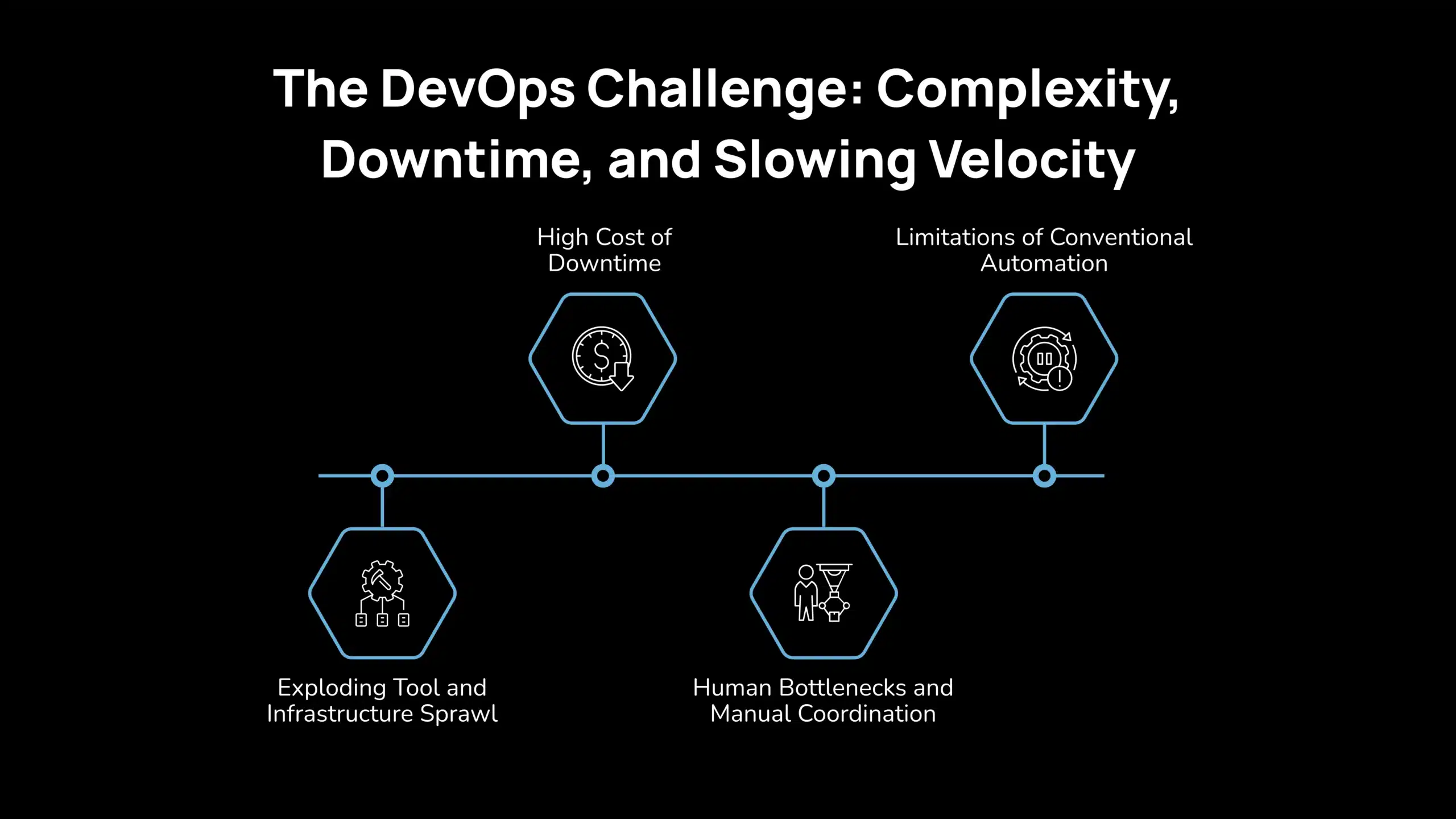

The DevOps Challenge: Complexity, Downtime, and Slowing Velocity

Modern DevOps environments promise speed and scalability, yet many teams find themselves constrained by the very systems meant to accelerate delivery. As infrastructure expands and workflows multiply, operational friction begins to surface in subtle but costly ways.

Exploding Tool and Infrastructure Sprawl

Microservices architectures, multi-cloud deployments, and layered CI/CD pipelines have introduced a level of operational complexity that is difficult to manage at scale. Each service, tool, and environment adds another dependency, another integration point, and another potential failure surface.

Teams often rely on a growing stack of monitoring platforms, deployment tools, and approval workflows. Coordination across these systems rarely happens seamlessly. Instead, work passes through multiple checkpoints, creating fragmented workflows and delayed decision-making. Engineering velocity slows, not because of a lack of automation, but because automation exists in silos rather than as a cohesive system.

High Cost of Downtime

Operational inefficiencies become most visible during outages. Every minute of unplanned downtime carries a measurable financial impact. Industry research shows that high-impact outages can cost large enterprises $2 million per hour, or roughly $33,000 for every minute of disruption.

Beyond direct revenue loss, downtime erodes customer trust, disrupts service continuity, and places additional strain on engineering teams. Longer resolution times increase the overall impact, making incident response speed a critical performance factor.

Human Bottlenecks and Manual Coordination

Despite advances in automation, a significant portion of engineering time is still consumed by operational overhead. Studies indicate that engineers spend roughly 32 percent of their time on activities such as incident triage, ticket handling, and system monitoring.

Each manual checkpoint, including pull request reviews, escalation approvals, and incident coordination, introduces latency into the delivery cycle. Senior engineers, who should be focused on building and optimizing systems, are often pulled into repetitive operational tasks. Over time, this creates a bottleneck that limits both productivity and innovation.

Limitations of Conventional Automation

CI/CD pipelines and scripted workflows have streamlined routine processes, but they operate within predefined conditions. When unexpected failures occur, these systems lack the contextual awareness required to diagnose and resolve issues independently.

Incident response still depends heavily on human intervention. Engineers must interpret alerts, correlate signals across tools, and execute runbooks under pressure. Even organizations that have adopted AIOps and observability platforms continue to experience longer-than-optimal mean time to resolution (MTTR), especially in complex, distributed environments.

Traditional automation accelerates execution, but it does not eliminate the overhead of decision-making. As systems grow more dynamic, this gap becomes increasingly difficult to ignore.

What Is Agentic AI in DevOps?

Agentic AI in DevOps refers to autonomous AI systems that can monitor, analyze, and act across the software delivery lifecycle. Instead of only executing predefined scripts or responding to alerts, these systems operate with goal-driven intelligence, enabling them to manage workflows, resolve issues, and optimize processes with minimal human intervention.

In a DevOps environment, agentic AI functions as an operational layer that connects observability, automation, and decision-making across tools and infrastructure.

Primary capabilities include:

- Continuous monitoring and context awareness: Analyzes logs, metrics, and system signals in real time

- Autonomous incident response: Detects issues, diagnoses root causes, and executes remediation actions

- Workflow orchestration: Coordinates tasks across CI/CD pipelines, infrastructure, and applications

- Adaptive decision-making: Adjusts actions based on changing system conditions and historical patterns

- Cross-system integration: Interacts with DevOps tools, cloud platforms, and APIs to execute tasks

- Continuous learning loops: Improves performance by learning from past incidents and outcomes

This shifts DevOps from reactive operations to a more proactive and continuously optimized system.
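The capabilities above can be pictured as a single observe, diagnose, act, and learn loop. The sketch below is a minimal, hypothetical illustration: a threshold check stands in for diagnosis and a dictionary of callables stands in for remediation tooling. `Agent`, `Signal`, and the playbook entries are invented for this example and do not come from any product.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Signal:
    service: str
    metric: str
    value: float


@dataclass
class Agent:
    """Hypothetical goal-driven agent: observe -> diagnose -> act -> learn."""

    thresholds: dict[str, float]                # metric name -> alert threshold
    playbook: dict[str, Callable[[str], bool]]  # diagnosis -> remediation (returns success)
    history: list[tuple[str, bool]] = field(default_factory=list)

    def diagnose(self, signal: Signal) -> Optional[str]:
        # "Context awareness" reduced to a simple threshold check for illustration.
        limit = self.thresholds.get(signal.metric)
        if limit is not None and signal.value > limit:
            return f"{signal.metric}_high"
        return None

    def step(self, signal: Signal) -> Optional[str]:
        diagnosis = self.diagnose(signal)
        if diagnosis is None:
            return None                              # nothing to do
        action = self.playbook.get(diagnosis)
        if action is None:
            return "escalate"                        # unknown pattern: hand off to a human
        succeeded = action(signal.service)
        self.history.append((diagnosis, succeeded))  # input for the learning loop
        return diagnosis if succeeded else "escalate"


agent = Agent(
    thresholds={"error_rate": 0.05},
    playbook={"error_rate_high": lambda service: True},  # stand-in for a real restart call
)
print(agent.step(Signal("checkout", "error_rate", 0.12)))   # error_rate_high
print(agent.step(Signal("checkout", "latency_ms", 900.0)))  # None (no threshold configured)
```

Unknown diagnoses and failed remediations both fall through to escalation, which reflects the idea that autonomy should first cover well-understood patterns.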

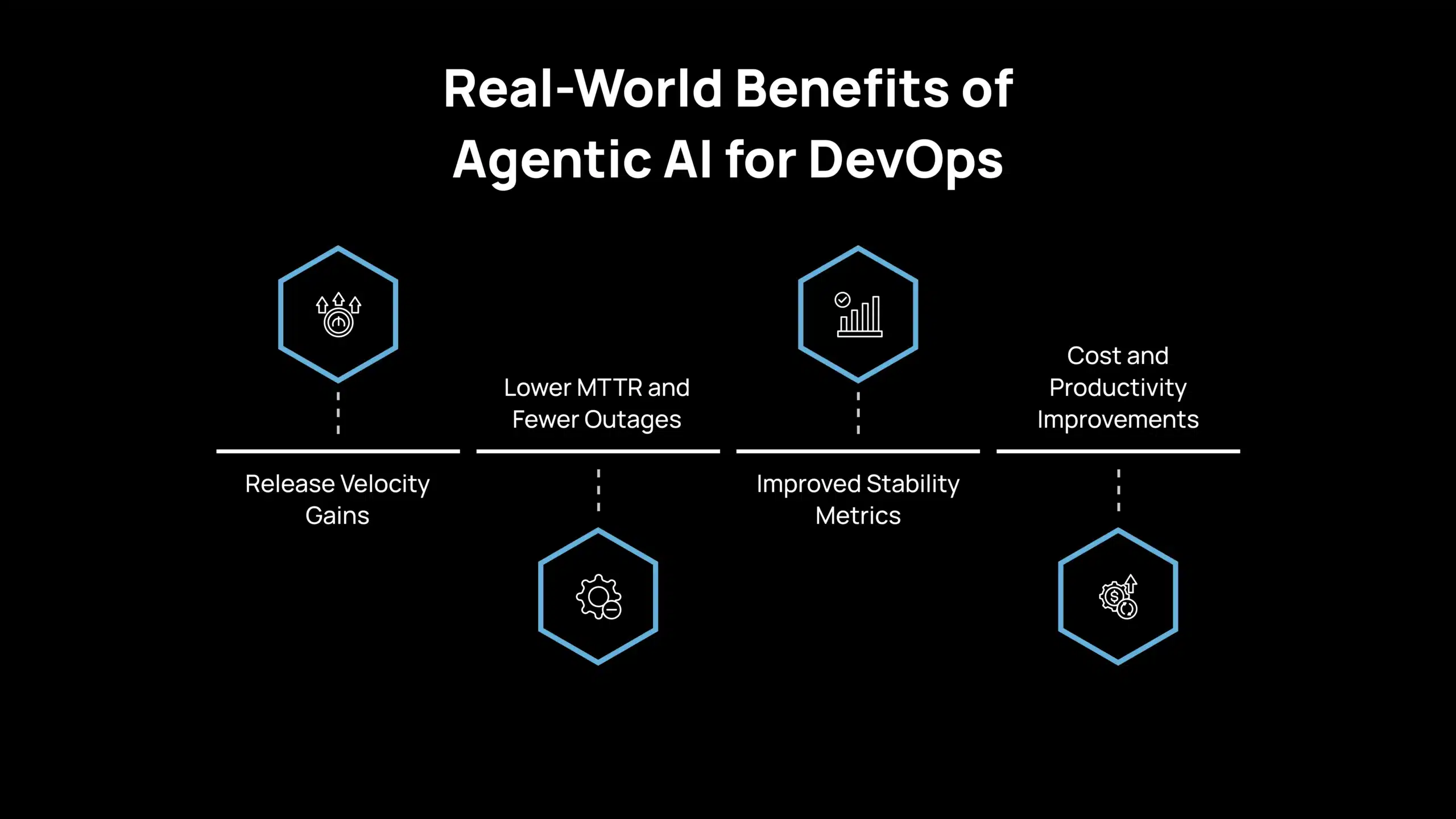

Real-World Benefits of Agentic AI for DevOps

Agentic AI is beginning to show measurable impact across DevOps environments, particularly in areas where speed, reliability, and coordination are critical. Rather than improving isolated tasks, these systems enhance how workflows operate end-to-end.

Release Velocity Gains

Teams are achieving faster delivery cycles as coordination overhead is reduced. By automating approvals, dependency management, and workflow orchestration, agentic systems enable parallel execution across pipelines.

Research from McKinsey indicates that software developers can complete some coding tasks up to twice as fast with generative AI. Where coding time is a bottleneck, that speedup can shorten lead times and accelerate releases.

Lower MTTR and Fewer Outages

Incident response is one of the most immediate areas of improvement. AI-driven observability and automation platforms can detect anomalies, correlate signals, and initiate remediation faster than manual processes.

Organizations using AI for IT operations have reported up to a 40% reduction in incident resolution time (MTTR).

Improved Stability Metrics

With continuous monitoring and automated response, systems become more resilient. Agentic AI reduces the likelihood of cascading failures by identifying issues earlier and responding before they escalate.

High-performing DevOps teams can achieve significantly lower failure rates and faster recovery times, and AI-driven workflows are increasingly contributing to these outcomes by improving consistency and reducing human error.

Cost and Productivity Improvements

By reducing manual intervention in incident management, deployment coordination, and monitoring, agentic AI allows engineering teams to focus on higher-value work.

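McKinsey analyses suggest that organizations adopting AI-driven automation can reduce inventory costs by 20–30%, although that figure concerns inventory management rather than software delivery. For DevOps teams, the more direct gain is a better developer experience, as repetitive tasks and operational overhead shrink.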

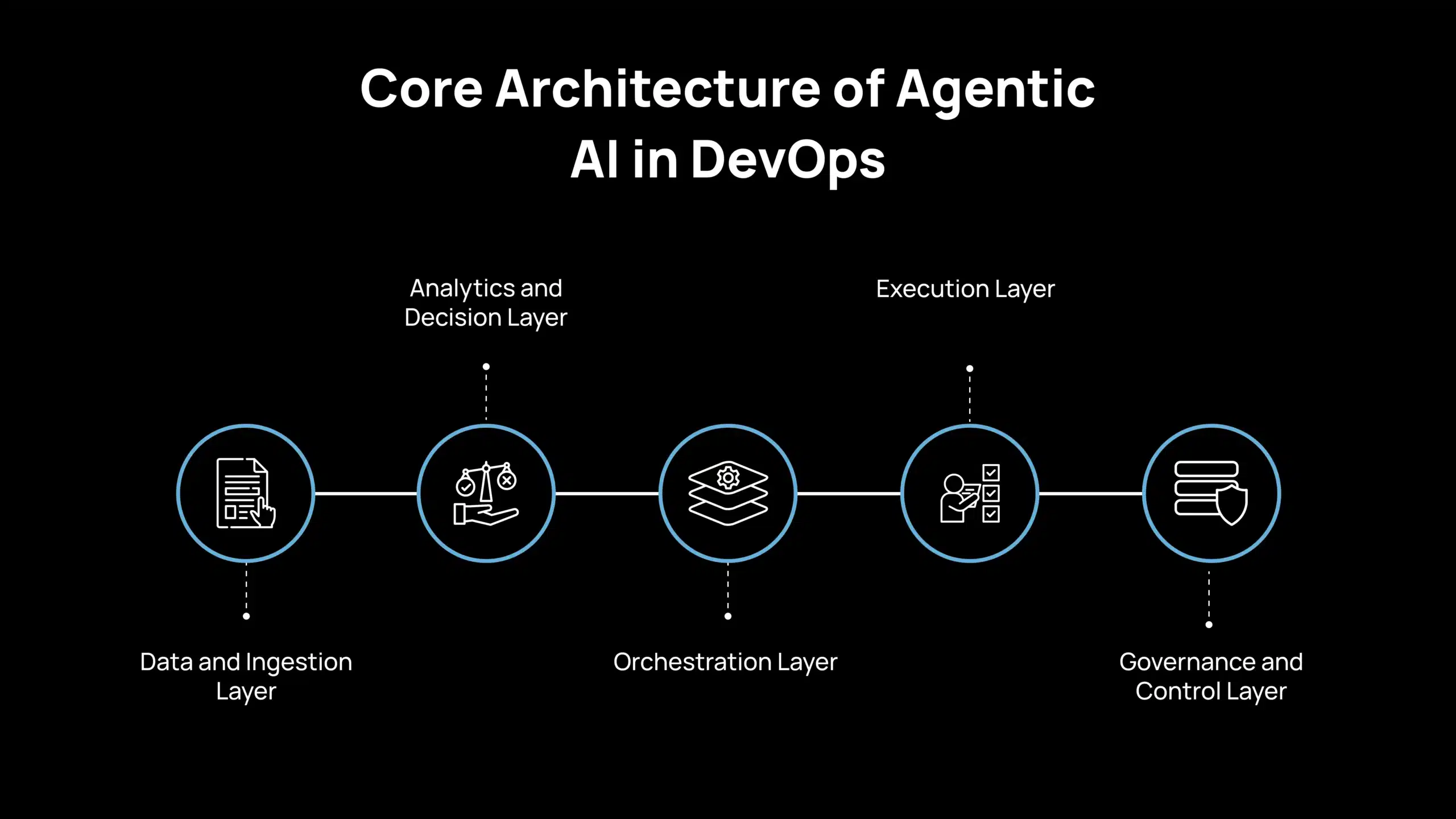

Core Architecture of Agentic AI in DevOps

Agentic AI in DevOps operates through a layered architecture that connects data, decision-making, and execution into a unified system. This structure ensures that agents can act autonomously while remaining aligned with enterprise controls, security policies, and operational standards.

Data and Ingestion Layer

This layer captures real-time signals across the DevOps ecosystem, including logs, metrics, CI/CD events, traces, and configuration changes. Data is aggregated from observability platforms and pipelines, then structured for downstream processing.

Modern implementations often combine observability stacks with vector databases to create contextual memory. This allows agents to retrieve historical incidents, runbooks, and system states, enabling more informed decisions rather than isolated reactions.
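As a rough illustration of the structuring step, the sketch below maps three kinds of raw payloads onto one event schema and sorts them into a single timeline. The payload field names (`type`, `ts`, `service`, `repo`, and so on) are hypothetical. Real observability and CI tools each use their own formats.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Event:
    source: str        # "log", "metric", or "ci"
    service: str
    timestamp: datetime
    summary: str


def normalize(raw: dict) -> Event:
    """Map one raw payload onto a shared schema (payload field names are hypothetical)."""
    kind = raw["type"]
    ts = datetime.fromtimestamp(raw["ts"], tz=timezone.utc)
    if kind == "log":
        return Event("log", raw["service"], ts, raw["message"])
    if kind == "metric":
        return Event("metric", raw["service"], ts, f"{raw['name']}={raw['value']}")
    if kind == "ci":
        return Event("ci", raw["repo"], ts, f"pipeline {raw['status']}")
    raise ValueError(f"unknown event type: {kind}")


raw_events = [
    {"type": "metric", "service": "api", "ts": 1700000060, "name": "p99_ms", "value": 850},
    {"type": "log", "service": "api", "ts": 1700000000, "message": "timeout calling db"},
    {"type": "ci", "repo": "api", "ts": 1699999900, "status": "succeeded"},
]

# One ordered timeline that downstream agents (or a vector store) can consume.
timeline = sorted((normalize(e) for e in raw_events), key=lambda e: e.timestamp)
for event in timeline:
    print(event.timestamp.isoformat(), event.source, event.service, event.summary)
```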

Analytics and Decision Layer

At this stage, AI models analyze incoming data to detect anomalies, identify patterns, and determine appropriate actions. Agents apply reasoning techniques such as prompt chaining and contextual inference to evaluate multiple possible responses.

Decision-making is often constrained by policy engines such as Open Policy Agent (OPA), ensuring that any recommended action aligns with predefined rules. For example, before modifying infrastructure, an agent may validate compliance requirements or risk thresholds through policy evaluation.
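To make the policy gate concrete, here is a minimal Python stand-in. OPA itself evaluates policies written in its Rego language. The plain functions below only mimic the shape of that check, with deny rules that each return a reason. The specific rules and thresholds (a 20-replica ceiling and a 0.7 risk score) are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Action:
    kind: str             # e.g. "scale", "restart", "delete"
    environment: str      # e.g. "staging", "production"
    replicas: int = 0
    risk_score: float = 0.0


# Hypothetical deny rules; in a real setup these would live in a policy engine such as OPA.
def no_deletes_in_prod(a: Action) -> Optional[str]:
    if a.kind == "delete" and a.environment == "production":
        return "deletes in production require a human"
    return None


def replica_ceiling(a: Action) -> Optional[str]:
    if a.kind == "scale" and a.replicas > 20:
        return "scale target exceeds the 20-replica ceiling"
    return None


def risk_threshold(a: Action) -> Optional[str]:
    if a.risk_score > 0.7:
        return f"risk score {a.risk_score} above 0.7"
    return None


POLICIES = [no_deletes_in_prod, replica_ceiling, risk_threshold]


def evaluate(action: Action) -> list[str]:
    """Return every denial reason; an empty list means the action may proceed."""
    return [reason for rule in POLICIES if (reason := rule(action)) is not None]


print(evaluate(Action("scale", "production", replicas=50)))
```

Collecting every reason, rather than stopping at the first denial, gives the agent and any human reviewer a complete explanation of why a plan was blocked.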

Orchestration Layer

The orchestration layer coordinates interactions between multiple agents and systems. It ensures that tasks are executed in the correct sequence and that dependencies are managed effectively.

Organizations may adopt centralized orchestration, in which a primary agent coordinates workflows, or distributed models, in which multiple specialized agents collaborate. Common patterns include sequential pipelines, where each agent handles a specific stage, and multi-agent systems that operate in parallel to accelerate execution.
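Both patterns can be sketched in a few lines of Python. The stage functions (`build`, `run_tests`, `deploy`) and the parallel checks are hypothetical placeholders for real agents. The sketch only shows how context flows from stage to stage in the sequential case and fans out concurrently in the parallel case.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Context = dict


def run_sequential(stages: list[Callable[[Context], Context]], ctx: Context) -> Context:
    """Sequential pipeline: each agent owns one stage and hands context to the next."""
    for stage in stages:
        ctx = stage(ctx)
    return ctx


def run_parallel(agents: dict[str, Callable[[Context], object]], ctx: Context) -> dict:
    """Parallel pattern: independent specialist agents examine the same context."""
    with ThreadPoolExecutor() as pool:
        futures = {name: pool.submit(fn, ctx) for name, fn in agents.items()}
        return {name: fut.result() for name, fut in futures.items()}


# Hypothetical stage agents; each returns a new context instead of mutating the old one.
def build(ctx: Context) -> Context:
    return {**ctx, "artifact": f"{ctx['service']}:{ctx['commit']}"}


def run_tests(ctx: Context) -> Context:
    return {**ctx, "tests_passed": True}


def deploy(ctx: Context) -> Context:
    return {**ctx, "deployed": ctx["tests_passed"]}


result = run_sequential([build, run_tests, deploy], {"service": "api", "commit": "abc123"})
checks = run_parallel(
    {"security": lambda c: "ok", "license": lambda c: "ok", "cost": lambda c: "ok"},
    result,
)
print(result)
print(checks)
```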

Execution Layer

This layer connects agent decisions to real-world actions. Agents interact directly with DevOps tools and environments through secure interfaces.

Typical actions include triggering CI/CD pipelines, executing infrastructure commands through tools such as Kubernetes or cloud CLIs, updating incident tickets, or generating pull requests for code changes. Every action is recorded in an audit trail to ensure traceability and compliance.
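Here is a minimal sketch of that execution boundary. It assumes agents can only call tools that have been explicitly registered. The tool names and lambdas are hypothetical stand-ins for CI, Kubernetes, or ticketing API calls. Every attempt, including failures, is written to the audit list.

```python
import json
import time
from typing import Callable


class Executor:
    """Maps agent decisions onto registered tools and records every attempt."""

    def __init__(self) -> None:
        self.tools: dict[str, Callable[..., str]] = {}
        self.audit: list[dict] = []

    def register(self, name: str, fn: Callable[..., str]) -> None:
        self.tools[name] = fn

    def execute(self, agent: str, tool: str, **params) -> str:
        entry = {"ts": time.time(), "agent": agent, "tool": tool, "params": params}
        try:
            if tool not in self.tools:
                raise ValueError(f"unregistered tool: {tool}")
            entry["result"] = self.tools[tool](**params)
            entry["status"] = "ok"
            return entry["result"]
        except Exception as exc:
            entry["status"] = f"error: {exc}"
            raise
        finally:
            self.audit.append(entry)  # logged whether the call succeeded or failed


ex = Executor()
# Hypothetical stand-ins for a CI trigger and a ticketing update.
ex.register("trigger_pipeline", lambda pipeline: f"started {pipeline}")
ex.register("update_ticket", lambda ticket, note: f"{ticket}: {note}")
ex.execute("release-agent", "trigger_pipeline", pipeline="api-deploy")
ex.execute("incident-agent", "update_ticket", ticket="INC-42", note="pipeline restarted")
print(json.dumps(ex.audit, indent=2))
```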

Governance and Control Layer

Governance ensures that autonomous systems operate within defined boundaries. This layer manages access control, policy enforcement, and system oversight.

Capabilities include role-based permissions, approval workflows, and explainability mechanisms. Advanced implementations may incorporate constraint-based decision frameworks that prevent unsafe actions. Immutable logs allow organizations to trace every action taken by an agent, which is critical for audits and post-incident analysis.
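One common way to make a log tamper-evident is hash chaining. Each entry stores a SHA-256 digest computed over its own content plus the previous entry's digest, so editing any earlier record breaks verification. The sketch below assumes records are JSON-serializable dictionaries. Chaining detects tampering but does not prevent it, so a production system would usually pair it with write-once storage.

```python
import hashlib
import json


class AuditLog:
    """Append-only log where each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(prev: str, record: dict) -> str:
        body = json.dumps(record, sort_keys=True)  # canonical form so hashes are stable
        return hashlib.sha256((prev + body).encode()).hexdigest()

    def append(self, record: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        digest = self._digest(prev, record)
        self.entries.append({"record": record, "prev": prev, "hash": digest})
        return digest

    def verify(self) -> bool:
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or self._digest(prev, entry["record"]) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append({"agent": "remediator", "action": "restart", "target": "checkout"})
log.append({"agent": "remediator", "action": "scale", "target": "checkout", "replicas": 6})
print(log.verify())  # True
log.entries[0]["record"]["action"] = "delete"  # simulate tampering with history
print(log.verify())  # False
```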

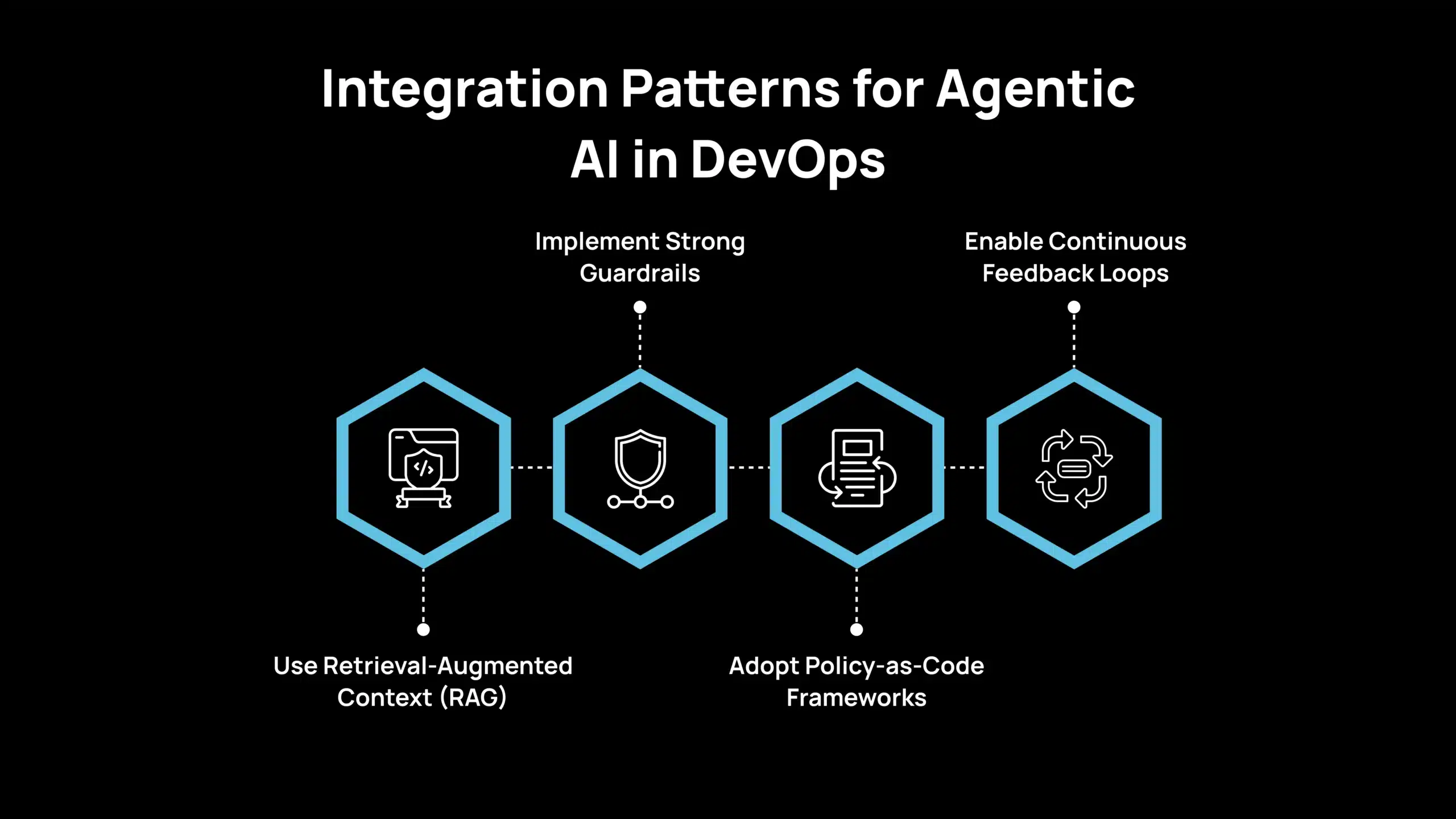

Integration Patterns for Agentic AI in DevOps

Once the architecture is in place, the real impact comes from how these systems connect and operate within your existing DevOps ecosystem. Integration patterns define how agents access context, enforce controls, and execute actions reliably across tools and workflows.

Use Retrieval-Augmented Generation (RAG) for Context

Agents perform more effectively when they can access relevant historical and operational context. Integrating retrieval mechanisms allows agents to query knowledge bases that include runbooks, architecture diagrams, and previous incident resolutions. This enables more accurate diagnosis and decision-making.
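The retrieval half of RAG can be sketched without any external service. Below, bag-of-words cosine similarity stands in for the embedding model and vector database a real system would use, and the knowledge-base entries are invented. The retrieved incidents are then placed into the prompt that would be sent to a language model.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base of past incidents and how they were resolved.
KNOWLEDGE_BASE = [
    {"id": "INC-101", "text": "checkout latency spike after db connection pool exhausted",
     "fix": "raise pool size, restart pods"},
    {"id": "INC-207", "text": "disk full on logging nodes caused dropped logs",
     "fix": "rotate logs, expand volume"},
    {"id": "INC-311", "text": "certificate expired on api gateway",
     "fix": "renew certificate"},
]


def vectorize(text: str) -> Counter:
    """Term-frequency vector; a stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, k: int = 1) -> list[dict]:
    q = vectorize(query)
    ranked = sorted(KNOWLEDGE_BASE, key=lambda d: cosine(q, vectorize(d["text"])), reverse=True)
    return ranked[:k]


def build_prompt(alert: str) -> str:
    context = "\n".join(f"{d['id']}: {d['text']} -> {d['fix']}" for d in retrieve(alert, k=2))
    return f"Alert: {alert}\nSimilar past incidents:\n{context}\nPropose a diagnosis."


print(build_prompt("latency spike on checkout, db pool exhausted"))
```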

Implement Strong Guardrails

Each agent should operate within clearly defined access boundaries. Role-based permissions ensure that agents only perform actions relevant to their function. For example, a diagnostic agent may have read-only access to system configurations, while a remediation agent can execute changes within controlled environments.
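A minimal default-deny permission check along these lines might look like the following. The role names mirror the example in the text. The resources, the `Perm` enum, and the rule that remediation writes are limited to staging and canary environments are illustrative assumptions.

```python
from enum import Enum


class Perm(Enum):
    READ = "read"
    WRITE = "write"


# Hypothetical role table: resource -> permissions each agent role holds.
ROLES: dict[str, dict[str, set[Perm]]] = {
    "diagnostic": {"configs": {Perm.READ}, "logs": {Perm.READ}},
    "remediation": {"configs": {Perm.READ, Perm.WRITE}, "logs": {Perm.READ}},
}

# Hypothetical "controlled environments" in which each role may make changes.
WRITE_ENVIRONMENTS: dict[str, set[str]] = {"remediation": {"staging", "canary"}}


def is_allowed(role: str, resource: str, perm: Perm, environment: str) -> bool:
    """Default deny: anything not explicitly granted is refused."""
    if perm not in ROLES.get(role, {}).get(resource, set()):
        return False
    if perm is Perm.WRITE:
        return environment in WRITE_ENVIRONMENTS.get(role, set())
    return True


print(is_allowed("diagnostic", "configs", Perm.WRITE, "staging"))
print(is_allowed("remediation", "configs", Perm.WRITE, "canary"))
```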

Adopt Policy-as-Code Frameworks

Policy engines should be embedded within the decision workflow. Before executing any action, agents validate plans against compliance rules, security requirements, and operational constraints. This reduces risk while maintaining the speed of automation.

Enable Continuous Feedback Loops

Every deployment, incident, and remediation action should feed back into the system. By capturing outcomes and learning from both successful and unsuccessful actions, organizations can continuously improve model performance and decision accuracy.
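Here is a small sketch of such a loop, assuming outcomes are recorded per diagnosis and remediation action. Laplace smoothing (adding one success and one failure to every count) is an illustrative choice. It lets untried actions start at 0.5 instead of zero. The diagnosis and action names are hypothetical.

```python
from collections import defaultdict


class FeedbackStore:
    """Tracks remediation outcomes so future choices favor what has worked."""

    def __init__(self) -> None:
        # (diagnosis, action) -> [successes, attempts]
        self.stats: defaultdict = defaultdict(lambda: [0, 0])

    def record(self, diagnosis: str, action: str, succeeded: bool) -> None:
        counts = self.stats[(diagnosis, action)]
        counts[0] += int(succeeded)
        counts[1] += 1

    def success_rate(self, diagnosis: str, action: str) -> float:
        successes, attempts = self.stats.get((diagnosis, action), (0, 0))
        # Laplace smoothing: untried actions are neither ignored nor over-trusted.
        return (successes + 1) / (attempts + 2)

    def best_action(self, diagnosis: str, candidates: list[str]) -> str:
        return max(candidates, key=lambda a: self.success_rate(diagnosis, a))


store = FeedbackStore()
for ok in (False, False):
    store.record("pool_exhausted", "restart", ok)
for ok in (True, True):
    store.record("pool_exhausted", "raise_pool_size", ok)
print(store.best_action("pool_exhausted", ["restart", "raise_pool_size", "scale_out"]))  # raise_pool_size
```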

A well-implemented architecture does more than automate tasks. It creates a system in which data, intelligence, and execution work together, enabling DevOps teams to move from reactive incident handling to proactive, continuously optimized operations.

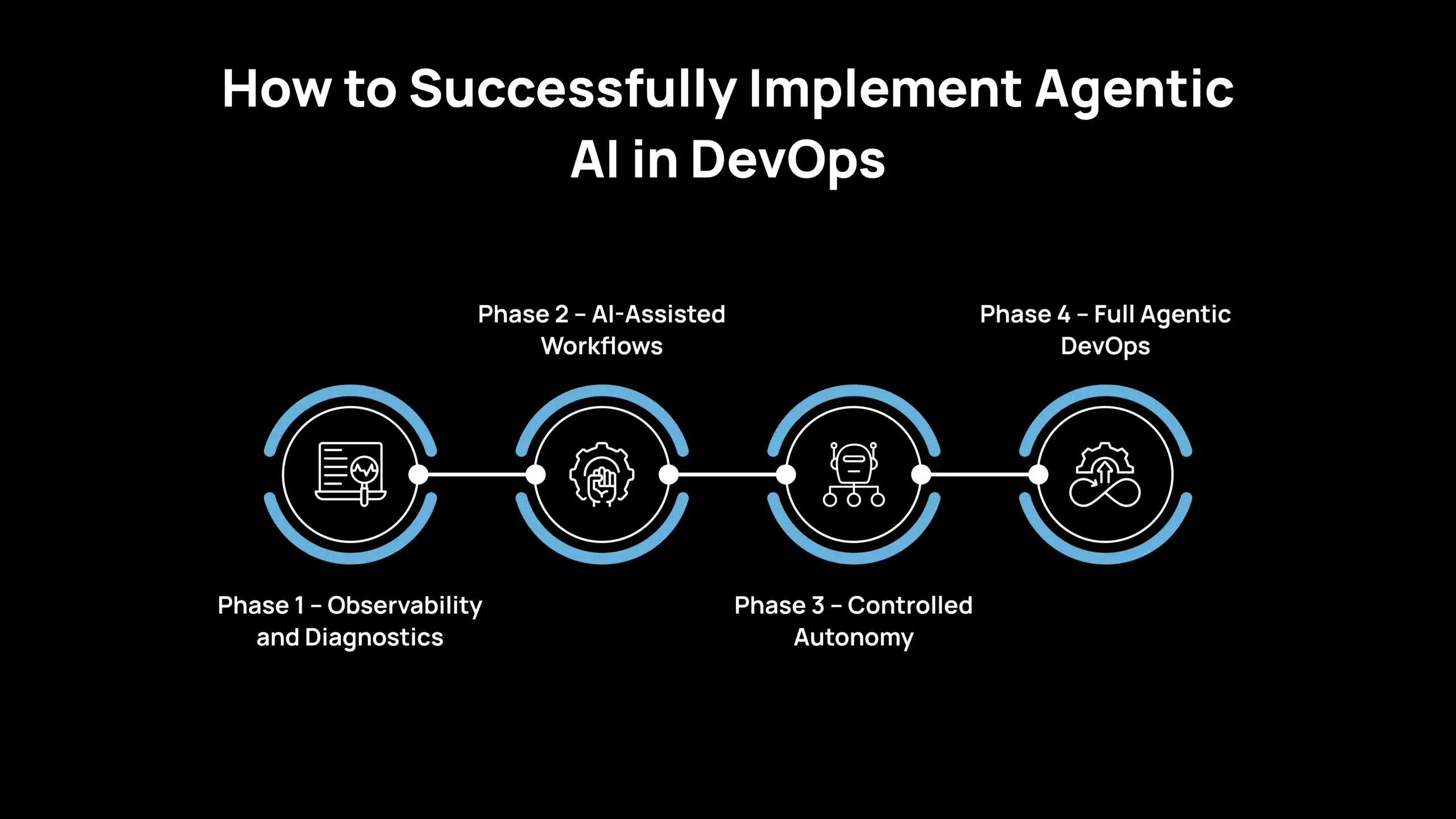

How to Successfully Implement Agentic AI in DevOps

Adopting agentic AI in DevOps requires a phased approach that balances innovation with control. Organizations that move too quickly toward full autonomy often face governance and reliability challenges, while those that move too slowly fail to realize meaningful impact. A structured roadmap allows teams to build trust, validate outcomes, and scale capabilities in a controlled manner.

Phase 1 – Observability and Diagnostics

The first step is establishing a strong observability foundation. Organizations deploy AIOps and analytics platforms to gain visibility into system behavior, incident patterns, and operational bottlenecks.

At this stage, AI is primarily used for signal correlation and root-cause analysis. Instead of relying on isolated alerts, systems begin identifying relationships across logs, metrics, and traces. This reduces noise and helps teams understand failure patterns more effectively.
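Here is a deliberately simple version of that correlation step. Alerts for the same service that arrive within a time window of each other are folded into one group, so the five raw alerts below become three groups to review. Grouping by service name and using a 300-second window are assumptions. Real correlation engines may also draw on topology and signal similarity.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    ts: int          # seconds since epoch
    service: str
    message: str


def correlate(alerts: list[Alert], window_s: int = 300) -> list[list[Alert]]:
    """Group alerts for the same service that arrive within window_s of the
    previous alert in that group."""
    groups: list[list[Alert]] = []
    open_groups: dict[str, list[Alert]] = {}
    for alert in sorted(alerts, key=lambda a: a.ts):
        group = open_groups.get(alert.service)
        if group and alert.ts - group[-1].ts <= window_s:
            group.append(alert)
        else:
            group = [alert]
            groups.append(group)
            open_groups[alert.service] = group
    return groups


alerts = [
    Alert(0, "db", "high cpu"),
    Alert(30, "db", "slow queries"),
    Alert(60, "checkout", "5xx rate up"),
    Alert(90, "db", "replication lag"),
    Alert(4000, "db", "high cpu"),
]
groups = correlate(alerts)
print(f"{len(alerts)} alerts -> {len(groups)} groups")
```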

Baseline metrics are critical. Teams should track DevOps performance indicators such as mean time to detection (MTTD), mean time to resolution (MTTR), deployment frequency, and lead time for changes. These metrics serve as a reference point for measuring the impact of agentic systems in later stages.
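Capturing that baseline can be as simple as averaging timestamp differences across past incidents. The sketch below assumes each incident record carries start, detection, and resolution times (the field names are hypothetical). It measures MTTR from the start of impact. Some teams measure it from detection instead, so it helps to fix the definition before comparing phases.

```python
from datetime import datetime
from statistics import mean

# Hypothetical incident records with ISO-8601 timestamps.
incidents = [
    {"started": "2024-05-01T10:00", "detected": "2024-05-01T10:06", "resolved": "2024-05-01T10:50"},
    {"started": "2024-05-09T22:15", "detected": "2024-05-09T22:19", "resolved": "2024-05-09T23:05"},
]


def minutes_between(a: str, b: str) -> float:
    return (datetime.fromisoformat(b) - datetime.fromisoformat(a)).total_seconds() / 60


def baseline(records: list[dict]) -> dict[str, float]:
    return {
        # MTTD: start of impact -> detection
        "mttd_min": mean(minutes_between(r["started"], r["detected"]) for r in records),
        # MTTR: measured here from start of impact -> resolution
        "mttr_min": mean(minutes_between(r["started"], r["resolved"]) for r in records),
    }


print(baseline(incidents))
```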

Phase 2 – AI-Assisted Workflows

Once visibility is established, organizations introduce AI agents as assistants rather than decision-makers. The focus is on augmenting human workflows, not replacing them.

Common implementations include AI-powered pull request reviews, automated incident prioritization, and chat-based assistants that gather logs or suggest remediation steps for on-call engineers. These systems reduce manual effort while keeping humans in the loop for all critical decisions.

Human approval remains mandatory for execution. This phase is essential for building trust in AI systems, refining decision logic, and identifying gaps in data or workflows before moving toward autonomy.
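Here is a minimal sketch of that approval gate. It assumes the agent packages each proposed action as a deferred callable and that an approver callback decides whether it runs. In practice, the approver might be a ChatOps button or a ticket workflow. Here, `cli_approver`, which prompts on the terminal, and the restart proposal are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Proposal:
    summary: str
    command: Callable[[], str]  # deferred action: nothing runs until approved


def run_with_approval(p: Proposal, approve: Callable[[Proposal], bool]) -> str:
    """Phase 2 pattern: the agent proposes, a human decides, and only then does it execute."""
    if not approve(p):
        return f"rejected: {p.summary}"
    return p.command()


def cli_approver(p: Proposal) -> bool:
    """Hypothetical interactive approver for local experiments."""
    answer = input(f"Approve '{p.summary}'? [y/N] ")
    return answer.strip().lower() == "y"


proposal = Proposal("restart checkout pods", lambda: "restarted checkout")  # stand-in action
# run_with_approval(proposal, cli_approver)  # prompts before executing
```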

Phase 3 – Controlled Autonomy

In this stage, agents begin executing low-risk actions under defined constraints. Organizations allow AI systems to handle routine operational tasks such as restarting services, scaling infrastructure, or resolving known failure patterns.

Risk is managed through controlled deployment strategies such as feature flags, canary releases, or blue-green environments. These mechanisms limit the impact of incorrect actions while allowing systems to learn and improve.
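As a rough illustration of how a canary limits the blast radius of an agent's change, the check below compares the canary's error rate with the baseline's. It then decides whether to promote, wait, or roll back. The 1.5x ratio, the 0.1% floor, and the 500-request minimum are hypothetical values chosen for the example, not recommendations.

```python
from dataclasses import dataclass


@dataclass
class Stats:
    requests: int
    errors: int

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0


def canary_verdict(baseline: Stats, canary: Stats,
                   max_ratio: float = 1.5, min_requests: int = 500) -> str:
    """Decide whether an agent-initiated change may be promoted.
    Thresholds are illustrative assumptions only."""
    if canary.requests < min_requests:
        return "wait"  # not enough traffic to judge yet
    allowed = max(baseline.error_rate * max_ratio, 0.001)  # floor avoids a zero error budget
    return "promote" if canary.error_rate <= allowed else "rollback"


base = Stats(requests=10_000, errors=50)
print(canary_verdict(base, Stats(requests=1_000, errors=20)))
```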

Performance tracking becomes more important. Organizations monitor decision accuracy, response times, and system outcomes to ensure that autonomous actions align with expected results. In mature implementations, AI-driven systems have demonstrated decision accuracy levels exceeding 90 percent in well-defined scenarios, enabling the gradual expansion of autonomy.

Phase 4 – Full Agentic DevOps

At this stage, agentic systems operate as an integrated layer across the DevOps lifecycle. Agents can coordinate end-to-end workflows, including pipeline execution, infrastructure management, compliance validation, and incident response.

Routine decisions, such as approving standard changes or triggering deployment strategies, can be handled autonomously. During high-pressure scenarios, such as outages, agents can execute predefined recovery strategies without waiting for manual intervention.

The role of engineering teams changes accordingly. Instead of managing day-to-day operations, engineers focus on defining policies, optimizing system behavior, and overseeing AI-driven workflows. This transition shifts DevOps from execution-heavy processes to system-level orchestration and governance.

Governance remains critical across all phases. Organizations must ensure that autonomous systems operate within defined boundaries and remain auditable.

Every agent action should be logged through an immutable audit trail, enabling full traceability for compliance and post-incident analysis. Clear approval workflows and escalation paths must be defined, even in highly autonomous environments.

Change management processes should also evolve to incorporate AI-driven workflows. Incident response frameworks need to include AI-assisted steps, along with clearly defined points where human intervention is required.

A well-structured governance model ensures that organizations can scale agentic AI safely while maintaining control, accountability, and operational reliability.

Metrics That Define Agentic DevOps Performance

Measuring the impact of agentic AI in DevOps requires a shift from isolated productivity metrics to system-level performance indicators. Organizations need to evaluate how AI influences speed, reliability, and operational efficiency across the entire delivery lifecycle.

- Lead time for changes: Reduction in time between code commit and production deployment, indicating faster release cycles

- Deployment frequency: Increase in the number of deployments, reflecting improved delivery velocity

- Mean time to detection (MTTD): Faster identification of incidents through continuous monitoring and anomaly detection

- Mean time to resolution (MTTR): Reduction in incident resolution time, often improved with AI-driven operations

- Change failure rate: Decrease in failed deployments due to better testing, validation, and automated rollback strategies

- System uptime and reliability: Improvements in availability metrics, supported by proactive monitoring and remediation

- Alert noise reduction: Fewer redundant alerts through AI-based correlation and prioritization

- Engineering productivity: More time spent on development versus operational overhead, supported by automation of repetitive tasks

Tracking these metrics allows organizations to quantify the value of agentic AI and ensure that automation improvements translate into measurable business outcomes.
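Several of these indicators can be computed directly from deployment records. The sketch below calculates deployment frequency, median lead time for changes, and change failure rate from four invented deployments over a seven-day window. It assumes each record holds one commit timestamp and a failure flag. Real pipelines usually track many commits per deployment.

```python
from datetime import datetime
from statistics import median

# Hypothetical deployment records for one service over one week.
deploys = [
    {"commit_at": "2024-06-03T09:00", "deployed_at": "2024-06-03T15:00", "failed": False},
    {"commit_at": "2024-06-04T11:00", "deployed_at": "2024-06-05T11:00", "failed": True},
    {"commit_at": "2024-06-06T08:00", "deployed_at": "2024-06-06T10:00", "failed": False},
    {"commit_at": "2024-06-07T13:00", "deployed_at": "2024-06-07T17:00", "failed": False},
]


def hours(a: str, b: str) -> float:
    return (datetime.fromisoformat(b) - datetime.fromisoformat(a)).total_seconds() / 3600


def delivery_metrics(records: list[dict], period_days: int) -> dict[str, float]:
    return {
        "deploys_per_day": len(records) / period_days,
        "median_lead_time_h": median(hours(r["commit_at"], r["deployed_at"]) for r in records),
        "change_failure_rate": sum(r["failed"] for r in records) / len(records),
    }


print(delivery_metrics(deploys, period_days=7))
```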

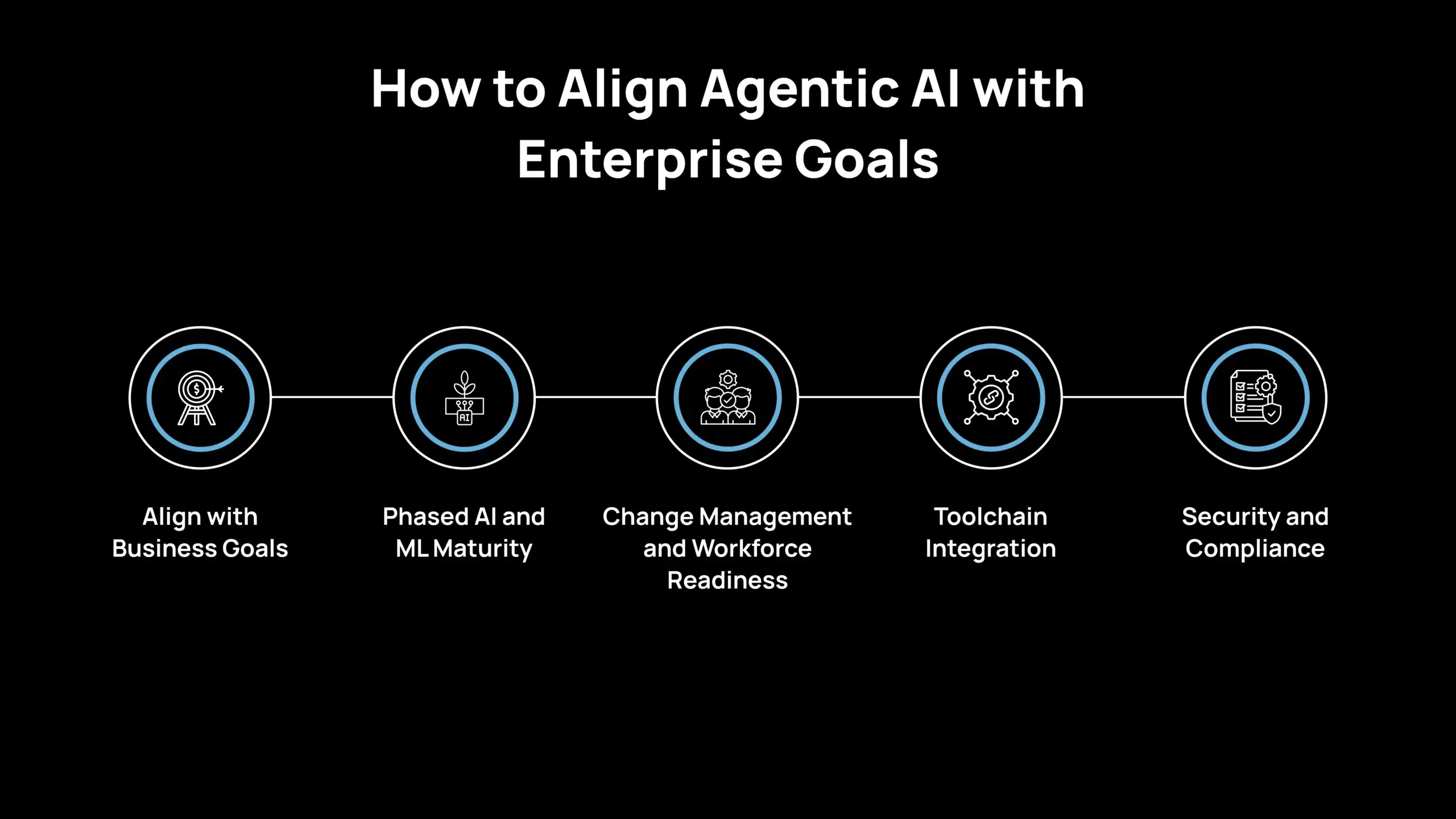

How to Align Agentic AI with Enterprise Goals

Adopting agentic AI in DevOps is not only a technology shift. It requires alignment with business objectives, operational maturity, and governance frameworks to ensure long-term value.

Align with Business Goals

Agentic DevOps should be positioned as a strategic investment in enterprise resilience and agility. Improvements in deployment velocity, system uptime, and incident response must be tied directly to business outcomes such as revenue continuity, customer retention, and service reliability. Enterprise guidance from Gartner emphasizes focusing on measurable productivity and operational gains rather than adopting AI as a standalone initiative.

Phased AI and ML Maturity

Organizations typically evolve through stages of AI adoption, beginning with focused use cases such as code generation or analytics before progressing to workflow orchestration. Agentic AI should extend existing DevOps and platform engineering efforts, enabling coordination across systems rather than replacing established processes. A phased approach ensures stability, reduces risk, and allows teams to validate outcomes before scaling autonomy.

Change Management and Workforce Readiness

Successful implementation depends on organizational readiness. Engineering teams must adapt to roles that emphasize oversight, policy definition, and system optimization rather than manual execution. Upskilling in AI governance, observability, and system monitoring becomes essential. Clear KPIs and visible improvements, such as reduced incidents or faster release cycles, help drive adoption and build confidence across teams.

Toolchain Integration

Agentic AI requires seamless integration across the DevOps ecosystem. Systems such as source control, CI/CD pipelines, infrastructure platforms, and ticketing tools must provide reliable APIs and consistent data access. A unified platform or internal developer portal can serve as the operational backbone, allowing agents to interact with systems, retrieve context, and execute workflows without friction.

Security and Compliance

Autonomous systems must operate within strict security and compliance boundaries. Policy-as-code frameworks ensure that every action aligns with organizational standards and regulatory requirements. Strong access controls, encrypted environments, and comprehensive audit trails are essential. As agents gain execution capabilities, security models must evolve to include continuous validation, monitoring, and oversight of AI-driven actions.

A structured strategy ensures that agentic AI strengthens DevOps practices while maintaining control, accountability, and long-term scalability.

How Avahi Helps You Turn AI Into Real Business Results

If your goal is to apply AI in practical ways that deliver measurable business impact, Avahi offers solutions designed specifically for real-world challenges. Avahi enables organizations to quickly and securely adopt advanced AI capabilities, supported by a strong cloud foundation and deep AWS expertise.

Avahi AI solutions deliver business benefits such as:

- Round-the-Clock Customer Engagement

- Automated Lead Capture and Call Management

- Faster Content Creation

- Quick Conversion of Documents Into Usable Data

- Smarter Planning Through Predictive Insights

- Deeper Understanding of Visual Content

- Effortless Data Access Through Natural Language Queries

- Built-In Data Protection and Regulatory Compliance

- Seamless Global Communication Through Advanced Translation and Localization

By partnering with Avahi, organizations gain access to a team with extensive AI and cloud experience committed to delivering tailored solutions. The focus remains on measurable outcomes, from automation that saves time and reduces costs to analytics that improve strategic decision-making to AI-driven interactions that elevate the customer experience.

Discover Avahi’s AI Platform in Action

At Avahi, we empower businesses to deploy advanced Generative AI that streamlines operations, enhances decision-making, and accelerates innovation, all with zero complexity.

As your trusted AWS Cloud Consulting Partner, we help organizations harness the full potential of AI while ensuring security, scalability, and compliance with industry-leading cloud solutions.

Our AI Solutions Include

- AI Adoption & Integration – Leverage Amazon Bedrock and GenAI to enhance automation and decision-making.

- Custom AI Development – Build intelligent applications tailored to your business needs.

- AI Model Optimization – Seamlessly switch between AI models with automated cost, accuracy, and performance comparisons.

- AI Automation – Automate repetitive tasks and free up time for strategic growth.

- Advanced Security & AI Governance – Ensure compliance, detect fraud, and deploy secure models.

Want to unlock the power of AI with enterprise-grade security and efficiency?

Start Your AI Transformation with Avahi Today!

FAQ

What is Agentic AI in a DevOps context?

Agentic AI refers to AI systems composed of autonomous agents that can observe DevOps environments, make contextual decisions (e.g., during incidents or deployments), and take actions across tools. Unlike scripts, these agents can adapt and coordinate end-to-end tasks.

How does Agentic DevOps differ from traditional AIOps?

AIOps platforms analyze data and often alert humans. Agentic DevOps goes further: it uses AI agents to act on insights. For example, an agent can automatically trigger a rollback or scale infrastructure based on learned thresholds, rather than waiting for manual intervention.
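For the scaling case, one common starting point is the proportional rule documented for the Kubernetes Horizontal Pod Autoscaler: desired = ceil(current * metric / target). Under that rule, replicas stay unchanged when the ratio falls within a tolerance. The sketch below reimplements the rule in Python. An agent could supply `target` from a learned baseline rather than a fixed number, which is an assumption of this example. The min/max bounds are hypothetical guardrails.

```python
import math


def desired_replicas(current: int, metric: float, target: float,
                     min_r: int = 2, max_r: int = 20, tolerance: float = 0.1) -> int:
    """Proportional scaling: ceil(current * metric / target), skipped when the
    ratio is within tolerance and clamped to hypothetical min/max bounds."""
    ratio = metric / target
    if abs(ratio - 1.0) <= tolerance:
        return current  # close enough to target: avoid flapping
    return max(min_r, min(max_r, math.ceil(current * ratio)))


# e.g. 4 replicas at 90% CPU against a 60% target
print(desired_replicas(4, metric=90, target=60))
```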

Will agents replace DevOps engineers?

No. Agents handle routine monitoring, troubleshooting, and execution, freeing engineers to focus on design, architecture, and improvement. As Harness notes, humans will shift from writing deployment scripts to designing cognitive architectures and policies.

What metrics should we track when implementing Agentic AI?

Monitor both standard DevOps metrics and new agent-specific ones: the DORA metrics (deployment frequency, lead time for changes, change failure rate, and MTTR) plus agent-specific KPIs like Decision Accuracy (AI vs. human match rate) and Autonomy Coverage (% of tasks handled by agents).
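Both agent-specific KPIs reduce to simple ratios over a task log. The sketch below assumes each task records the agent's chosen action, the action a human reviewer would have taken (or None if the task was not reviewed), and whether the agent executed it autonomously. The records are invented.

```python
# Hypothetical task log.
tasks = [
    {"agent": "restart", "human": "restart", "autonomous": True},
    {"agent": "scale_out", "human": "scale_out", "autonomous": True},
    {"agent": "rollback", "human": "hotfix", "autonomous": False},
    {"agent": "restart", "human": "restart", "autonomous": False},
]


def decision_accuracy(records: list[dict]) -> float:
    """Share of reviewed tasks where the agent's choice matched the human's."""
    reviewed = [r for r in records if r.get("human") is not None]
    return sum(r["agent"] == r["human"] for r in reviewed) / len(reviewed) if reviewed else 0.0


def autonomy_coverage(records: list[dict]) -> float:
    """Share of all tasks the agent completed without human execution."""
    return sum(r["autonomous"] for r in records) / len(records) if records else 0.0


print(decision_accuracy(tasks), autonomy_coverage(tasks))
```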

What are the risks of Agentic AI, and how do we mitigate them?

Risks include misconfiguration, security breaches, and over-reliance on AI. Mitigation includes enforcing strict IAM roles, keeping humans in the loop for high-risk changes, and using policy engines to block unsafe commands. Start small and validate agents’ decisions before fully trusting them.

Which DevOps areas benefit most from agents?

Incident response and release orchestration see the biggest wins. Agents excel at 24/7 monitoring, alert correlation, and fast remediation. They also boost release pipelines by auto-merging tested changes and validating compliance in real time, enabling continuous, reliable delivery.